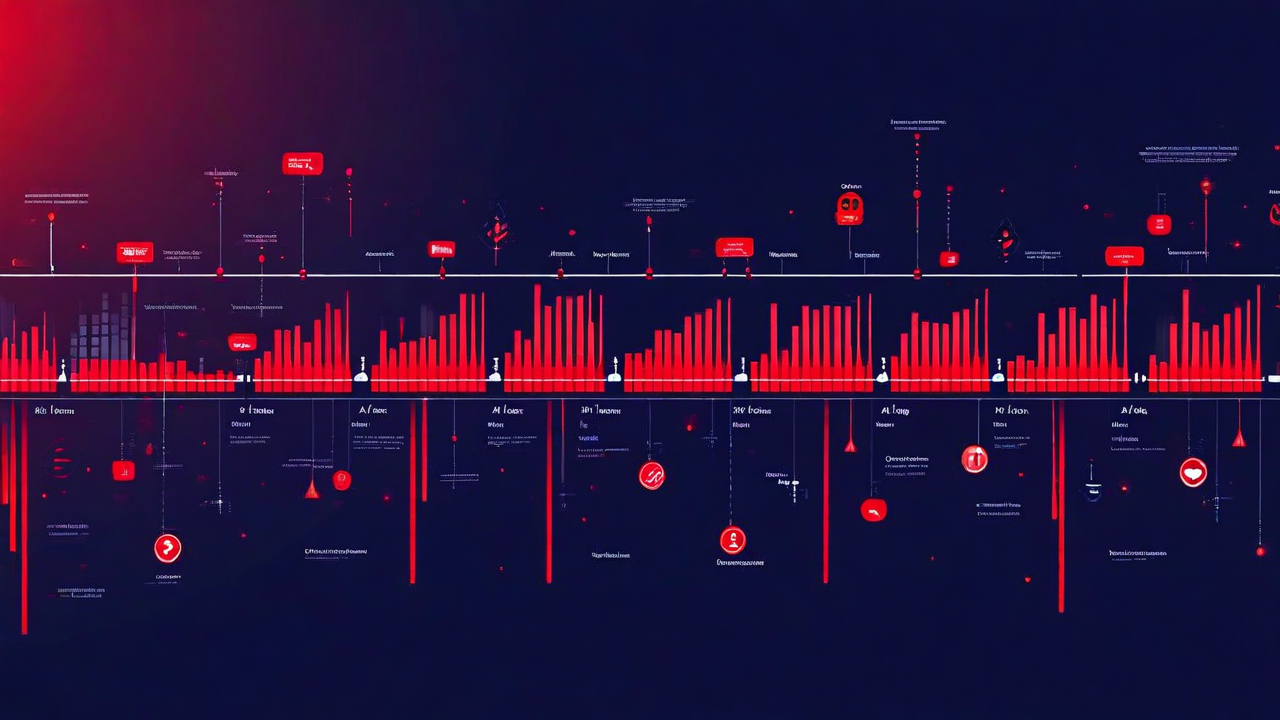

The Real Cost of API Downtime — Numbers From 30 Customers

Last quarter we ran a structured interview series with 30 of our customers who had experienced at least one significant API outage in the past twelve months. We asked them to walk through the timeline and enumerate every cost — engineering time, support burden, revenue impact, customer churn, and the stuff that doesn't show up in any postmortem: the political cost of losing trust with a large enterprise client.

What we found was consistent enough to be uncomfortable. Direct revenue loss was real but usually the smallest item. The bigger costs were almost always invisible in the moment.

The Numbers

The median outage in our sample lasted 47 minutes. The range was 8 minutes to 6.5 hours. Incidents under 15 minutes almost universally went undetected until a customer reported them — 22 of the 30 companies said they had no automated detection that would have caught a sub-15-minute partial failure.

Direct revenue loss across the sample averaged $14,800 per incident for companies with transaction-based revenue. For companies selling SaaS subscriptions, the direct per-incident figure was lower — around $3,200 — but the downstream churn effect was significantly higher. Four companies in the sample reported losing an enterprise contract renewal within 60 days of a major incident. Those contract values ranged from $85,000 to $340,000 annually.

Engineering cost was the biggest number that showed up consistently across the sample. The average time from first alert to resolved and documented was 4.2 hours of senior engineer time. At loaded cost, that's $800-$1,200 per incident depending on team location and seniority. Multiply by the number of incidents (most companies in the sample were averaging 3-5 per quarter, or 12-20 per year) and you're looking at roughly $10,000-$24,000 per year just in engineering overhead, before you count the other costs.
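If you want to run the same arithmetic on your own numbers, here is a minimal Python sketch. The function name is ours and purely illustrative; the inputs are the per-incident cost and incident frequency quoted above, taken at their extremes.

```python
def annual_engineering_cost(cost_per_incident: int, incidents_per_quarter: int) -> int:
    """Annualized engineering overhead: per-incident cost x incidents per year."""
    return cost_per_incident * incidents_per_quarter * 4


# Extremes of the ranges quoted above: $800-$1,200 per incident, 3-5 incidents per quarter.
low = annual_engineering_cost(800, 3)
high = annual_engineering_cost(1200, 5)
print(f"${low:,} to ${high:,} per year")  # prints: $9,600 to $24,000 per year
```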

The Costs Nobody Accounts For

Support ticket volume spiked during every incident in the sample. The average was 34 inbound support contacts per hour of outage time. At $18-$25 per ticket to resolve (fully loaded cost), a two-hour outage generates $1,200-$1,700 in support cost alone.
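The support figure is the same kind of back-of-the-envelope calculation. A small sketch, with the sample averages as defaults (the function and parameter names are illustrative, not from any tool):

```python
def support_cost_range(outage_hours, contacts_per_hour=34, cost_per_ticket=(18, 25)):
    """Return (low, high) support cost in dollars for an outage of the given length."""
    tickets = outage_hours * contacts_per_hour
    return tickets * cost_per_ticket[0], tickets * cost_per_ticket[1]


low, high = support_cost_range(2)  # a two-hour outage, i.e. 68 tickets
print(f"${low:,} to ${high:,}")  # prints: $1,224 to $1,700
```

Swap in your own contact rate and per-ticket cost; the defaults only reflect the averages from our sample.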

But the number that most teams haven't tried to measure is partner trust erosion. Eight companies in our sample had a significant enterprise client or technical integration partner who either downgraded their usage or started evaluating alternatives after an incident. None of those eight quantified it in their postmortem. They mentioned it in interviews when we asked open-ended questions about business impact.

The pattern: enterprise clients will often tolerate one high-visibility incident before they change their risk assessment. B2B companies are particularly vulnerable because their clients' engineers are watching reliability metrics and reporting to their own management. One bad incident becomes a slide in their quarterly review. Two incidents become a vendor risk conversation.

Where the Detection Gap Is

We asked everyone where their monitoring failed them. Three patterns showed up repeatedly.

First: uptime checks that hit a health endpoint rather than exercising real business logic. Your /health endpoint returning 200 while your payment processing endpoint is timing out is not a monitoring success — it's a monitoring illusion. Twelve of the 30 companies described some version of this failure mode. Health endpoints should exercise real dependencies, not just return a hardcoded status.
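As a rough illustration of a health check that exercises dependencies, here is a framework-agnostic Python sketch. Everything in it is an assumption for the sake of the example: `deep_health`, `check_database`, and `check_payment_gateway` are hypothetical names, and the two checks are stubs standing in for real calls (a trivial query, a sandbox charge, and so on). Your web framework's handler would return the status code and body it produces.

```python
import time
from typing import Callable, Dict, Tuple


def deep_health(checks: Dict[str, Callable[[], None]]) -> Tuple[int, dict]:
    """Run every dependency check and return (http_status, response_body).

    Each check is a callable that raises an exception on failure.
    """
    results = {}
    for name, check in checks.items():
        started = time.monotonic()
        try:
            check()
            entry = {"ok": True}
        except Exception as exc:  # any failure marks the dependency unhealthy
            entry = {"ok": False, "error": str(exc)}
        entry["latency_ms"] = round((time.monotonic() - started) * 1000, 1)
        results[name] = entry
    healthy = all(r["ok"] for r in results.values())
    body = {"status": "ok" if healthy else "unhealthy", "checks": results}
    return (200 if healthy else 503), body


# Hypothetical stubs; replace with calls to your real dependencies.
def check_database() -> None:
    pass  # e.g. run a trivial query against the primary


def check_payment_gateway() -> None:
    raise TimeoutError("payment gateway timed out")  # simulated failure


status, body = deep_health({"database": check_database, "payments": check_payment_gateway})
print(status, body["status"])  # prints: 503 unhealthy
```

The point of the design is that the endpoint can only return 200 if the things customers depend on actually responded.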

Second: alert thresholds set too high to catch slow degradation. Most incidents don't start as a complete outage. They start as a p99 latency increase that gradually becomes a p95 problem that eventually starts producing errors. Teams that set their alert threshold at "error rate > 5%" miss the preceding 45 minutes where things were quietly going wrong and getting worse. By the time the alert fires, you're in a crisis. Earlier detection means you can often intervene before customers notice.
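One way to catch the degradation phase is to alert in tiers rather than on a single error-rate line. The sketch below is only an illustration: the type, the function, and every threshold in it (1.5x baseline p99, 2x baseline p95, 1% and 5% error rates) are assumptions you would tune to your own traffic, not recommendations from the interviews.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class WindowStats:
    p95_ms: float
    p99_ms: float
    error_rate: float  # fraction of requests, so 0.05 means 5%


def alert_level(current: WindowStats, baseline: WindowStats) -> Optional[str]:
    """Classify a metrics window against a known-good baseline."""
    if current.error_rate >= 0.05:
        return "critical"  # the classic "it's broken" alert
    if current.error_rate >= 0.01 or current.p95_ms >= 2.0 * baseline.p95_ms:
        return "warning"  # degradation is now reaching typical requests
    if current.p99_ms >= 1.5 * baseline.p99_ms:
        return "notice"  # tail latency is drifting; earliest signal
    return None


baseline = WindowStats(p95_ms=120, p99_ms=300, error_rate=0.001)
print(alert_level(WindowStats(130, 520, 0.001), baseline))  # prints: notice
print(alert_level(WindowStats(260, 900, 0.004), baseline))  # prints: warning
print(alert_level(WindowStats(400, 2000, 0.07), baseline))  # prints: critical
```

The lower tiers can go to a channel rather than a pager, so the early signal exists without waking anyone up for it.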

Third: no per-endpoint observability. A service-level error rate of 0.2% might look fine in aggregate, but if it's concentrated on one endpoint — say, your webhook delivery endpoint — that endpoint might be failing 30% of the time. Aggregate metrics hide per-endpoint failures. Several teams only discovered this after reviewing API gateway logs for a postmortem.
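To see how the aggregate hides the problem, here is a small Python sketch that computes both views from the same request log. The log format (endpoint, status code) and the traffic mix are made up for illustration, chosen to reproduce the 0.2% and 30% figures above.

```python
from collections import defaultdict


def error_rates(requests):
    """requests: iterable of (endpoint, status_code) pairs.

    Returns (overall_error_rate, {endpoint: error_rate}), counting 5xx as errors.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for endpoint, status in requests:
        totals[endpoint] += 1
        if status >= 500:
            errors[endpoint] += 1
    per_endpoint = {e: errors[e] / totals[e] for e in totals}
    overall = sum(errors.values()) / sum(totals.values())
    return overall, per_endpoint


# Illustrative traffic: a busy healthy endpoint plus a low-volume failing one.
log = (
    [("/orders", 200)] * 14_900
    + [("/webhooks", 200)] * 70
    + [("/webhooks", 500)] * 30
)
overall, per_endpoint = error_rates(log)
print(f"overall: {overall:.1%}")                      # prints: overall: 0.2%
print(f"/webhooks: {per_endpoint['/webhooks']:.0%}")  # prints: /webhooks: 30%
```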

What Actually Reduces Cost

Across the 30 companies, the ones who reported lower incident costs shared three things. They had synthetic monitoring running against production every minute. They had separate alerts for different error rate thresholds — not just "is it broken" but "is it degrading." And they had a runbook for the three most common failure modes, reviewed and tested in the past 90 days.
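For a sense of what a once-a-minute synthetic check involves, here is a bare-bones sketch using only the Python standard library. It is not how any particular monitoring product works; `probe`, `run`, and the example URL are hypothetical, and a real setup would add authentication, response-body assertions, and alert routing.

```python
import time
import urllib.request


def probe(url, timeout_s=5.0, opener=urllib.request.urlopen):
    """Issue one request and record whether it succeeded and how long it took."""
    started = time.monotonic()
    status = None
    try:
        with opener(url, timeout=timeout_s) as resp:
            status = resp.status
    except urllib.error.HTTPError as exc:
        status = exc.code  # the server answered, but with a 4xx/5xx
    except OSError:
        pass  # DNS failure, refused connection, timeout: status stays None
    latency_ms = (time.monotonic() - started) * 1000
    ok = status is not None and 200 <= status < 300
    return {"url": url, "ok": ok, "status": status, "latency_ms": latency_ms}


def run(urls, interval_s=60, cycles=None, report=print):
    """Probe every URL once per interval; cycles=None means run until stopped."""
    done = 0
    while cycles is None or done < cycles:
        for url in urls:
            report(probe(url))
        done += 1
        if cycles is None or done < cycles:
            time.sleep(interval_s)


# Example (hypothetical URL):
# run(["https://api.example.com/v1/payments/ping"], interval_s=60)
```

The `opener` parameter exists so the probe can be tested without touching the network; in production you would leave it at the default.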

The runbook thing sounds obvious but almost nobody does it well. Having a runbook written six months ago that nobody has reviewed isn't much better than not having one. The value is in the review process — running through the steps quarterly surfaces the places where your infrastructure has drifted from what the runbook describes.

Time to detection is the metric that matters most. Every minute between when an incident starts and when you know about it is a minute of customer impact you're not mitigating. The companies with the lowest total incident costs weren't the ones who had fewer incidents — they were the ones who found out about them faster.
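If you keep start and detection timestamps in your incident timelines, tracking this metric takes a few lines. A sketch in Python; the timestamps below are invented purely to show the calculation and are not from the interviews.

```python
from datetime import datetime
from statistics import median


def minutes_to_detect(started_at: str, detected_at: str) -> float:
    """Minutes between incident start and first detection (ISO 8601 timestamps)."""
    start = datetime.fromisoformat(started_at)
    detected = datetime.fromisoformat(detected_at)
    return (detected - start).total_seconds() / 60


# Made-up incident records: (started_at, detected_at)
incidents = [
    ("2024-01-10T14:00:00", "2024-01-10T14:03:00"),
    ("2024-02-02T09:30:00", "2024-02-02T09:52:00"),
    ("2024-03-18T22:15:00", "2024-03-18T22:23:00"),
]
ttd = [minutes_to_detect(s, d) for s, d in incidents]
print(f"median: {median(ttd)} min, worst: {max(ttd)} min")  # prints: median: 8.0 min, worst: 22.0 min
```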

Detect API problems before your customers do

APIForge runs synthetic endpoint monitoring every minute against your production APIs. Set granular alert thresholds per endpoint, get notified in Slack or email, and keep a full incident timeline for postmortems.
